Will AI Get Better at Helping Make Music? A Forecast


You’re staring at an unfinished loop at 1:17 a.m. The drums hit, the bass is fine, but the chorus still doesn’t lift. You open an AI tool and ask for ideas, hoping it will “hear” what you mean. The real question behind “Will AI get better at helping with making music?” is whether AI will move from clever suggestions to a reliable musical partnership without flattening your taste, your identity, or your rights.

The short forecast (2026–2030): yes, but “better” will mean more controllable

AI will get better at helping with making music, but not mainly by replacing artists. In practice, the biggest gains are coming from control, context, and workflow speed: tools that understand your project’s key, BPM, arrangement, stems, and references, and then make edits that survive real-world mixing and release standards. Research coverage from Carnegie Mellon suggests AI may eventually generate compelling waveforms, but in listener judgments current systems still lag humans on perceived creativity and novelty. That gap is an important clue about what “better” must target next: originality plus intent, not just plausibility (CMU research).

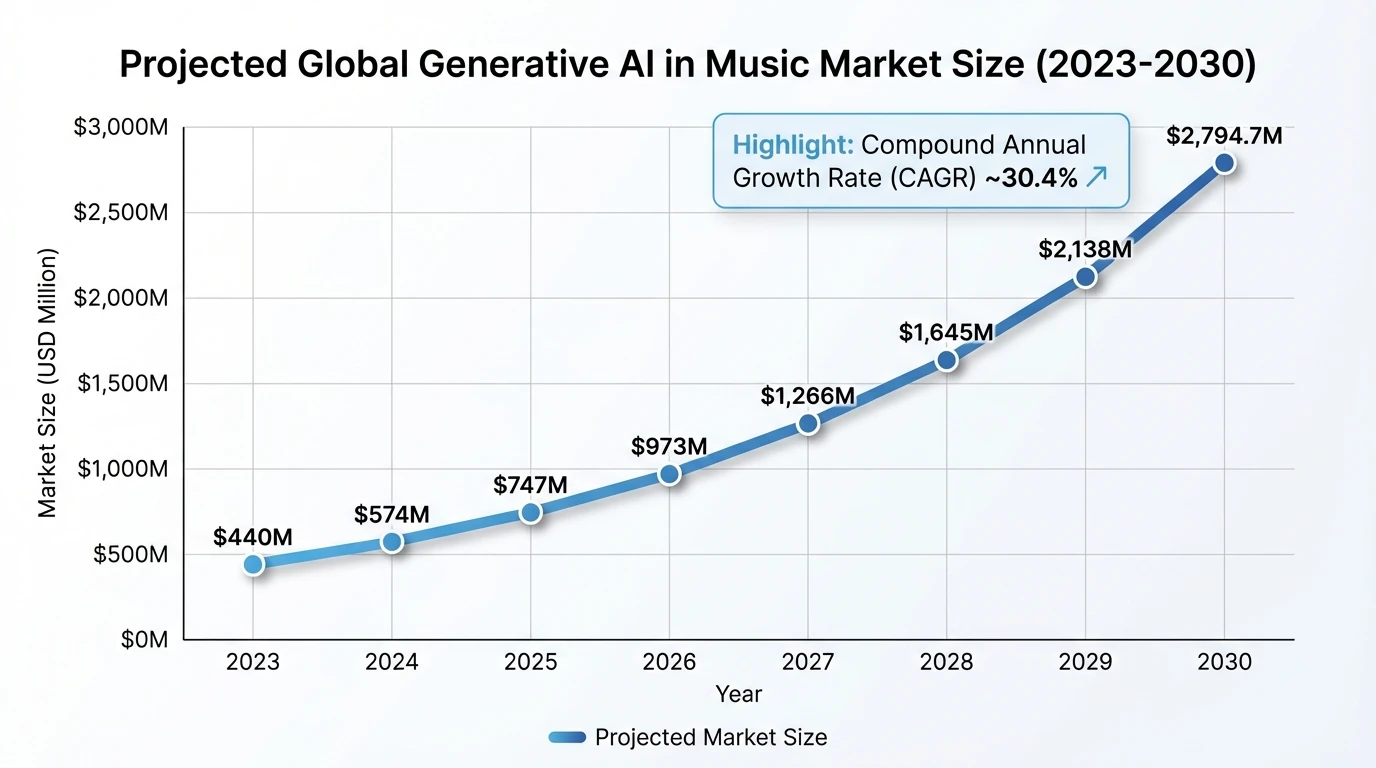

At the same time, markets rarely sustain a 30%+ CAGR unless the tools are improving quickly or becoming much easier to adopt. Grand View Research estimates the generative AI in music market will grow from $440M (2023) to $2.79B (2030) at a ~30.4% CAGR, growth that usually goes hand in hand with better models, better UX, and deeper integration into creator pipelines (Grand View Research report).
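As a quick sanity check on those figures: the compound annual growth rate implied by two endpoint values is (end / start)^(1 / years) − 1. The sketch below applies that formula to the $440M and $2.79B estimates. Treating 2023 to 2030 as seven compounding years is our assumption; the report may measure its CAGR over a different window, which would account for a small gap from the stated ~30.4%.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted above, in millions of USD.
start_2023 = 440.0
end_2030 = 2790.0

# Assumption: seven compounding years between the 2023 and 2030 estimates.
implied = cagr(start_2023, end_2030, years=7)
print(f"Implied CAGR 2023-2030: {implied:.1%}")

# Forward check: compound the 2023 figure at the stated ~30.4% for seven years.
print(f"2030 value at 30.4%: ${project(start_2023, 0.304, 7):,.0f}M")
```

Run as written, the implied rate comes out at about 30.2%, and compounding $440M at 30.4% for seven years lands near $2.82B. The quoted numbers are therefore consistent to within rounding and the choice of base year.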

What “better” will look like in music creation (not just generating songs)

When creators ask if AI will get better at helping with making music, they often imagine “type a prompt, get a hit.” In the studio, “better” usually means AI can do specific jobs with fewer artifacts, fewer wrong guesses, and more respect for your style.

Expect the most visible progress in:

- Co-writing support that follows constraints
  - More accurate chord-scale alignment, melody contour options, and call-and-response phrasing.
  - Better section-aware writing (verse, pre-chorus, chorus, bridge) that doesn’t feel stitched together.
- Production help that sounds mix-ready
  - Cleaner stem separation, better de-noise, smarter transient shaping, and more natural-sounding vocal editing.
  - AI that can propose two mixes, “streaming safe” vs. “club loud,” and then explain what changed.
- Arrangement intelligence
  - Tools that can detect drops, energy ramps, and pacing issues, then suggest edits you can audition instantly.
- Personal “artist DNA” systems
  - Instead of generic outputs, AI will learn your palette (with permissions), so suggestions carry your own fingerprints.
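To make “chord-scale alignment” concrete: one of the simplest checks a co-writing assistant can run is whether a suggested melody stays inside the key, or where it deliberately steps outside it. The sketch below works with pitch classes and a major-scale interval pattern. It is a toy illustration under those assumptions, not how any particular tool implements the feature, and it ignores modes, borrowed chords, and intentional chromaticism.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]
MAJOR_STEPS = [0, 2, 4, 5, 7, 9, 11]  # semitone offsets of a major scale


def major_scale(tonic: str) -> set[int]:
    """Pitch classes (0-11) of the major scale built on `tonic`."""
    root = NOTE_NAMES.index(tonic)
    return {(root + step) % 12 for step in MAJOR_STEPS}


def out_of_key(melody_midi: list[int], tonic: str) -> list[tuple[int, str]]:
    """Return (position, note name) for every melody note outside the key."""
    scale = major_scale(tonic)
    return [
        (i, NOTE_NAMES[pitch % 12])
        for i, pitch in enumerate(melody_midi)
        if pitch % 12 not in scale
    ]


# A short motif as MIDI note numbers (60 = middle C); 63 is D#/Eb.
motif = [60, 62, 64, 67, 63, 65, 64]
print(out_of_key(motif, "C"))  # [(4, 'D#')]
```

A real assistant would likely go further, for example checking each note against the chord underneath it rather than against the whole key. The same pitch-class arithmetic would still sit underneath.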

Why AI improves fast in music: three technical tailwinds

From what I’ve seen testing AI music tools in real creator workflows, improvements come in bursts when three things click at once—data, models, and interfaces.

- Better audio understanding (structure, not just sound)
  - The next wave isn’t only “generate audio.” It’s “understand form”: bars, downbeats, fills, tension, release.
- Multimodal learning
  - Systems that connect text, MIDI, audio, and even video cues can align intent (“make it darker”) to real musical changes (mode mixture, orchestration, dynamics).
- Tooling inside workflows
  - The winners will feel like instruments: fast auditioning, undo, A/B, and controls that map to producer language.

This is why platforms focusing on music-aware structure tend to feel more “useful” than pure prompt generators.
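Understanding form starts with something as plain as a bar grid. Given a tempo and a time signature, bar boundaries fall at fixed times, and comparing average energy bar by bar gives a crude way to flag candidate drops or energy ramps. The sketch below makes several assumptions: a constant tempo, a 4/4 meter, a first downbeat at 0 s, and per-bar energy values that have already been measured (the numbers are made up for illustration). Real tools estimate beats and energy from the audio itself.

```python
def bar_starts(bpm: float, bars: int, beats_per_bar: int = 4, offset_s: float = 0.0) -> list[float]:
    """Start time in seconds of each bar, assuming a constant tempo."""
    seconds_per_bar = beats_per_bar * 60.0 / bpm
    return [offset_s + i * seconds_per_bar for i in range(bars)]


def candidate_drops(bar_energy: list[float], ratio: float = 1.5) -> list[int]:
    """Indices of bars whose energy jumps by at least `ratio` over the previous bar."""
    return [
        i
        for i in range(1, len(bar_energy))
        if bar_energy[i - 1] > 0 and bar_energy[i] / bar_energy[i - 1] >= ratio
    ]


# Illustrative per-bar energy for an 8-bar phrase (arbitrary units, made up).
energy = [0.20, 0.22, 0.25, 0.30, 0.32, 0.60, 0.62, 0.58]
starts = bar_starts(bpm=120, bars=len(energy))
for i in candidate_drops(energy):
    print(f"Possible drop at bar {i + 1} ({starts[i]:.1f} s)")
# prints "Possible drop at bar 6 (10.0 s)"
```

The 1.5× threshold is an arbitrary choice for the example. A fixed ratio is easy to audition and tweak, which fits the “controls that map to producer language” idea better than an opaque score.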

Where AI will still struggle (and why humans stay central)

Even as AI gets better at helping with making music, there are stubborn gaps:

- Taste is not the same as patterns
  - Models can imitate; they can’t care about which compromise you’re willing to make for your audience or message.
- Novelty with coherence
  - A lot of AI outputs are “pleasantly correct.” Humans push one weird choice that becomes the hook.
- Long-range storytelling
  - Great songs manage tension over minutes; many generators still drift or repeat without purpose.
- Accountability
  - When something is emotionally off, artists diagnose why. AI often can’t justify its choices in a way you can trust.

CMU’s reporting captures this well: AI-assisted music can be judged as less creative, and that gap matters because listener perception is the scoreboard (CMU research).

“Is AI good for music production?” Yes—when you use it like a power tool

AI is already good for music production when the task is narrow, testable, and reversible. I’ve personally had the best results using AI for idea expansion (alternate chord voicings, harmony stacks, drum variations) and time sinks (cleanup, separation, rough masters), then making human calls on hook, lyric truth, and arrangement risk.

Practical ways AI helps today:

- Songwriting
  - Drafting variations (not final lyrics), rhyme options, melody scaffolds, chord substitutions.
- Sound design
  - Generating textures, pads, one-shots, or “starter” patches you then shape.
- Mix prep
  - Stem separation, de-click, noise reduction, timing alignment.
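Chord substitution is one of the easier idea-expansion jobs to make precise. As one example, the sketch below applies a tritone substitution, a standard jazz reharmonization move that swaps a dominant seventh chord for the dominant seventh a tritone away (G7 becomes Db7). We chose this technique for illustration; it is not necessarily what any AI tool uses. The code only handles plain “7” chords with common root spellings.

```python
SHARP_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]
FLAT_NAMES = ["C", "Db", "D", "Eb", "E", "F", "Gb", "G", "Ab", "A", "Bb", "B"]


def split_chord(symbol: str) -> tuple[int, str]:
    """Split a chord symbol like 'Bb7' into (pitch class, quality suffix)."""
    root_len = 2 if len(symbol) > 1 and symbol[1] in "#b" else 1
    root, quality = symbol[:root_len], symbol[root_len:]
    names = FLAT_NAMES if "b" in root else SHARP_NAMES
    return names.index(root), quality  # raises ValueError on spellings like 'Cb'


def tritone_sub(progression: list[str]) -> list[str]:
    """Replace each plain dominant seventh chord with the dominant a tritone away."""
    result = []
    for symbol in progression:
        pitch_class, quality = split_chord(symbol)
        if quality == "7":  # only plain dominant sevenths are substituted
            result.append(FLAT_NAMES[(pitch_class + 6) % 12] + "7")
        else:
            result.append(symbol)
    return result


print(tritone_sub(["Dm7", "G7", "Cmaj7"]))  # ['Dm7', 'Db7', 'Cmaj7']
```

This substitution works because G7 and Db7 share the same tritone (B and F, spelled Cb and F in Db7). That shared interval is why it counts as an idea worth auditioning rather than a random swap.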

Industry tools are leaning into this “assistant” framing, including ethical frameworks and artist-centric systems aimed at preserving identity rather than replacing it (Soundverse overview).

A practical forecast by task (what improves first)

| Music task | How AI helps today | What will improve next | Human role that remains hard to replace |
|---|---|---|---|
| Chord progressions & harmony | Suggests progressions, reharmonization ideas | Better style control, tension curves, voice leading | Taste, “why this chord here,” emotional intent |
| Melody & topline | Generates motifs and contours | More singable phrasing, better range constraints | Hook instincts, performer identity |
| Lyrics | Drafts themes, rhymes, rewrites | Stronger point of view consistency, fewer clichés | Lived experience, truth, subtext |
| Arrangement | Basic sections and build-ups | Section-aware energy design, better transitions | Storytelling, surprise, pacing risks |
| Mixing/mastering | Quick rough masters, cleanup | Context-aware mix decisions per genre/platform | Aesthetic judgment, reference matching, brand sound |
| Sound design | Generates textures and sample ideas | Better editability (macro controls, stems) | Curating signature sounds |

The legal and ethical reality: “better” also means safer

A tool can be musically impressive and still be risky to use commercially. Two practical issues will shape how fast AI improves as a helper (not a gamble):

- Copyrightability and ownership
  - In the U.S., the Copyright Office’s guidance has emphasized that purely AI-generated works may not qualify for copyright protection without human authorship, which means “100% AI” can be a business problem, not a creative win.
- Training data and consent
  - Expect more licensed datasets, provenance tracking, and clearer labeling, pushed by creators and organizations advocating for safeguards (see the Recording Academy’s policy discussions: Recording Academy on AI & copyright).

If you want a simple rule: the more you can document your human decisions (edits, selections, direction), the safer your claim to authorship becomes.
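One low-effort way to follow that rule is to keep a running log of what the AI proposed and what you decided. The sketch below uses a hypothetical format: the `Decision` fields and the JSON Lines layout are our own choices, not a legal standard or any tool’s export format. It is a record-keeping habit, not a guarantee of protection.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class Decision:
    """One human decision made on top of AI output (hypothetical schema)."""
    track: str
    stage: str          # e.g. "chords", "topline", "mix"
    ai_input: str       # what the tool proposed
    human_action: str   # what you kept, changed, or rejected, and why
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_decision(path: str, decision: Decision) -> None:
    """Append one decision as a JSON line so the log is easy to diff and audit."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(decision)) + "\n")


def load_decisions(path: str) -> list[Decision]:
    """Read every logged decision back, skipping blank lines."""
    with open(path, encoding="utf-8") as f:
        return [Decision(**json.loads(line)) for line in f if line.strip()]


if __name__ == "__main__":
    append_decision("session_log.jsonl", Decision(
        track="late-night-loop",
        stage="chords",
        ai_input="Suggested vi-IV-I-V for the chorus",
        human_action="Kept vi-IV, replaced I-V with a borrowed iv to darken the lift",
    ))
    print(len(load_decisions("session_log.jsonl")), "decision(s) logged")
```

We chose an append-only text file deliberately. It works alongside any DAW, survives version control, and timestamps each choice as you make it rather than reconstructing your process after release.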

What the “30% rule for AI” looks like in music (a useful way to work)

People mention a “30% rule” in different contexts, but the most practical version in music is this: use AI to get the first 30% fast, then do the remaining 70% with human taste. That 70% is where identity lives—micro-timing, lyric specificity, arrangement risks, and performance choices.

A tight workflow that works:

- Prompt AI for 5–10 options (chords, drum patterns, toplines).

- Pick 1 direction and commit quickly.

- Human-edit aggressively: cut sections, rewrite lines, re-voice chords, re-sound design.

- Test against references and your target platform (a TikTok hook window vs. full-length Spotify listening).
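The first two steps of that workflow (generate several options, then commit to one) can be mimicked with a toy generator. In the sketch below, a seeded random mutation of a 16-step kick pattern stands in for an AI tool. It is not a model. The code only lays the options out side by side; the pick is still made by ear.

```python
import random


def variations(base: list[int], n: int, flips: int = 2, seed: int = 7) -> list[list[int]]:
    """Return n variations of a step pattern, each with `flips` distinct steps toggled."""
    rng = random.Random(seed)  # seeded so the same options come back on rerun
    options = []
    for _ in range(n):
        pattern = base.copy()
        for step in rng.sample(range(len(base)), flips):
            pattern[step] = 1 - pattern[step]  # hit becomes rest, rest becomes hit
        options.append(pattern)
    return options


def show(pattern: list[int]) -> str:
    """Render a pattern as a step-sequencer row: x = hit, . = rest."""
    return "".join("x" if hit else "." for hit in pattern)


four_on_floor = [1, 0, 0, 0] * 4
for i, option in enumerate(variations(four_on_floor, n=6), start=1):
    print(i, show(option))
# Audition, pick one number, then edit that pattern by hand.
```

Seeding the generator reflects “commit quickly”: you can come back to option 3 tomorrow and get exactly the same pattern to keep editing.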

The “35-year rule” and the “80/20 rule” in songwriting—how AI changes them

These “rules” are more folk wisdom than physics, but they’re helpful lenses.

- 35-year rule in music (generational cycles)
  - Styles often come back when a new generation recontextualizes them. AI will accelerate this by making it easier to explore past palettes quickly, but the standout tracks will still be the ones that add a new twist.
- 80/20 in songwriting
  - Often, 20% of the song (the hook, title line, core groove) drives 80% of the impact. AI can help generate many hook candidates, but humans still win at picking the one that matches a real emotion and a real audience moment.

Where Freebeat AI fits: the future isn’t just making songs—it’s making music-driven content

A big shift is that “helping with making music” increasingly includes helping you ship the music. Today, creators don’t just need audio; they need assets: visuals, teasers, lyric videos, performance clips, and story-driven edits that match the track’s energy.

This is where Freebeat AI’s specialization matters. Because Freebeat is built for audio-reactive video generation, it understands song structure—BPM, beats, bars, drops, sections—and uses that to drive camera motion, transitions, and pacing across a full video. In my experience, this is the difference between visuals that merely “move” and visuals that feel edited to the music.

Ways creators use Freebeat-style systems to extend music output:

- Turn one finished song into multiple platform-native videos: a storytelling cut, a stage-performance cut, a lyric cut.
- Maintain a consistent persona using custom AI avatars and reusable visual identities.
- Reduce the “post-production tax”: fewer manual keyframes, fewer missed beats, faster iteration.
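Avoiding missed beats mostly comes down to timing arithmetic. At a known tempo, beats land every 60 / BPM seconds, so a rough cut point can be snapped to the nearest beat, or to the nearest bar line for bigger transitions. The sketch below assumes a constant tempo and a known first-beat offset, and the helper name `snap_to_grid` is ours. It is a generic illustration, not Freebeat’s implementation, which the article does not describe.

```python
def snap_to_grid(t: float, bpm: float, offset_s: float = 0.0, beats_per_unit: int = 1) -> float:
    """Snap time t (seconds) to the nearest beat, or to every Nth beat if beats_per_unit > 1."""
    unit = beats_per_unit * 60.0 / bpm          # grid spacing in seconds
    return offset_s + round((t - offset_s) / unit) * unit


rough_cuts = [1.9, 4.27, 7.8, 15.1]  # hand-placed edit points, in seconds
bpm = 128
on_beat = [snap_to_grid(t, bpm) for t in rough_cuts]
on_bar = [snap_to_grid(t, bpm, beats_per_unit=4) for t in rough_cuts]  # 4/4 bars
print([f"{t:.3f}" for t in on_beat])
print([f"{t:.3f}" for t in on_bar])
```

Snapping to beats suits quick cuts and camera moves. Snapping to bars (or to section boundaries) suits scene changes, which is one way song structure can drive pacing across a full video.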


So, will AI get better at helping with making music?

Yes—AI will get better at helping with making music in the ways musicians actually feel: faster ideation, cleaner production, stronger structure awareness, and tighter integration into release workflows. But the best future isn’t “AI replaces musicians.” It’s “AI removes friction,” while humans keep authorship, taste, and meaning.

If you’re building a creator pipeline today, the winning move is to pair AI-assisted music creation with music-aware video output—so every track can launch with visuals that hit on-beat and stay on-brand.


FAQ

1) Will AI get better at helping with making music, or has it already peaked?

It will get better—especially at control (style constraints), structure awareness (sections/drops), and production tasks like separation and cleanup.

2) Is AI good for music production?

Yes, for drafting ideas and speeding up technical steps. It’s strongest when you treat it like an assistant and keep final taste decisions human.

3) Is it illegal to make a song with AI?

Usually not illegal by default, but rights and licensing depend on training data, the tool’s terms, and how you use the output. Copyrightability can be limited for purely AI-made tracks.

4) What is the 30% rule for AI in music?

A practical approach: use AI for the first 30% (options and scaffolds) and rely on human editing for the remaining 70% where originality and identity are created.

5) What is the 80/20 rule in songwriting, and can AI help?

Often 20% of the song (hook/title/groove) creates 80% of the result. AI can generate many hook candidates quickly; humans choose the one that feels true.

6) What is the 35-year rule in music?

It’s a common idea that trends recycle across generations. AI may speed up revivals by making older styles easier to explore and remix, but standout work still needs a new angle.

7) Why do many AI projects fail, and what does that mean for music tools?

A major reason is poor or irrelevant data and mismatched goals. For creators, this means choosing tools with clear provenance, strong controls, and workflows that fit real production needs.